Gene Scheurer is co-founder and CEO of Optimum Healthcare IT of Jacksonville, FL.

Tell me about yourself and the company.

Jason Mabry and I started Optimum Healthcare IT in 2012. I had started CSI Tech, which we sold to Recruit in 2010, so we put the band back together in 2012. We were primarily focused on EHR implementations and rode that wave. We were #1 in KLAS in multiple overall services for a couple of years. We grew the business exponentially during that time.

We spun off Clearsense in 2016. That’s a data aggregation, data platform play. I went over to Clearsense in 2018 on a full-time basis to be the CEO. I promoted Jason Mabry and then Jason Jarrett into the CEO role at Optimum. I’ve been at Clearsense since. We signed some of the largest healthcare systems in the country as a data platform company. UPMC is a client and investor, Cleveland Clinic, Trinity, CommonSpirit, et cetera. We grew that business and started getting into the payer market as well.

As we made the transition to more of a product company and went into the payer market, I came to the realization that that isn't really my strength. I went to the board nine months ago and told them that it was time for me to pass the baton and for them to find a CEO who has a product and technical background and payer experience. We did that in January of this year, and now I'm back at Optimum as CEO on a full-time basis. I was always executive chairman at Optimum, so I had kept close to the business.

In 2020, we sold a majority interest to Achieve, which is an educational fund out of New York. We weren’t necessarily looking to sell, but their business model is to tap into their network of university partners. We created CareerPath, where we take kids out of college or with one or two years of operational experience and put them through a six-week healthcare IT curriculum and certification that is operated by CHIME and is exclusive to Optimum. They get a healthcare IT certificate through CHIME and then we put them on a track to get certified in Epic, ServiceNow, or Workday. We also do project management and BI.

It was a good opportunity to add talent into the marketplace from a supply and demand perspective and to lower costs to our customers by offering up-and-coming resources. We can reduce those pay rates to those individuals and ultimately pass that along to our customers.

After we did that transaction in 2020, we did an acquisition of Trustpoint Solutions, which focused on cybersecurity advisory. Then we added ServiceNow and cloud migration services and earned Workday certification.

How do you deploy both experienced resources as well as those you have developed through CareerPath?

We still do Epic implementations and we have 600 employees, so we still are providing opportunities to those consultants who have been in the ecosystem and have experience for 10-plus years. The CareerPath model, with more junior resources, was an option to offer a hybrid. We can marry highly experienced team leads with junior-level resources from an analyst perspective, and they can do some mentorship. It’s a way to drive down implementation or optimization costs for our customers while creating a new talent pool for ServiceNow and Workday.

What areas do health systems need help with?

We have new clients that are doing new Epic installs, and there are a lot of Cerner to Epic migrations happening. We also have some Meditech to Cerner customers that are doing new implementations. We have opportunities for new installs, training, go-lives on net-new implementations, and optimization.

A lot of healthcare systems have financial headwinds. They have made a large investment in the EHRs of their choice. They are optimizing those to make them as efficient as possible. We are asked to do a lot of workflow redesign and revenue cycle projects.

On the ServiceNow side, we have become the only Elite partner in healthcare. ServiceNow is looking more from a vertical perspective right now. Healthcare is so nuanced in terms of understanding the landscape and workflows and everything that goes into healthcare. It's probably one of the most dynamic and involved of all the verticals that they have, so they love the fact that we are healthcare focused. We bring to bear a lot of advisory around the healthcare landscape in conjunction with ServiceNow and Workday implementations.

Where are we in the always-swinging pendulum between healthcare outsourcing and insourcing of IT and revenue cycle?

We are seeing a lot more insourcing, as opposed to outsourcing. Those cycles always change, but within our customer base, we are seeing a lot of insourcing, coming back into the revenue cycle space. That is good for us because we can partner with our customers to help them bring in consultants to stand up those revenue cycle initiatives as they get operationalized and then get to a steady state.

Are you seeing any impact from AI?

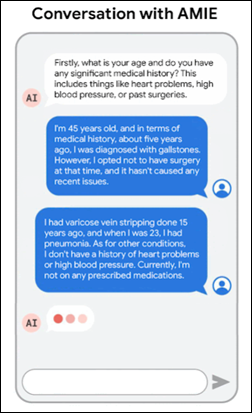

The software partners that we’re working with – Epic, Workday, and ServiceNow – have incorporated AI into their software and technologies. Everybody is using AI to some degree, and it’s typically use case specific. It’s a lot of buzz, but as it matures, you’ll start seeing AI’s true ability to demonstrate real results. A lot of it is embedded into the software, behind the curtain in a lot of ways, and people are using it one way or another even if they don’t realize it.

AI will drive efficiencies for customers as they become reliant upon whatever technology is within their ecosystem. Companies are learning how AI can make their software products more valuable to their customers.

Going back to your comment about preferring to run a services business rather than a product business, how do the required skills and abilities differ?

The services business is more transactional in nature. It's more volume. You create value through a different lens on the services side, because a professional services business is providing human capital. You need policies, procedures, and enablement for your consultants to thrive within a given customer. They need to feel supported, so there's a high-touch, high-customer-service aspect to it. You're working with human capital, so you want to make those people feel valued.

That also drives how your customers and clients view Optimum and our competitors. It's how you handle situations when things don't go exactly according to plan with a particular consultant. They have lives. Things happen. They have families. A particular consultant might be going through something that doesn't allow them to perform at their best, or they have issues where they can't be on site.

While you are dealing with employee or consultant issues, you have to offer white glove service to clients. You make sure that the client understands that we will do everything we can to backfill someone who doesn't work out. The onus is on a professional services company to have a system in place that supports its consultants so they can do the best job. They shouldn't have to worry about whether their expenses are going to get paid or payroll will be on time. Then, do they have a support system and mentors internally?

In comparison, on the software side, you are selling a multi-year, enterprise-wide data platform product. That sales cycle can take eight to 12 months. It’s very much a consensus-driven sale because you are touching multiple stakeholders within the healthcare system. You need to get the buy-in from the CIO, CFO, CMIO, and sometimes the CEO. There are multiple sales points.

The other side of it is delivery. Once you sell the deal, the difference is on the delivery of professional services. You are reliant on the human capital and their knowledge base.

On the technology or SaaS data platform side, you are reliant on the technology. That involves a wholly different set of challenges and people that you are working with. I'm not technical even though I started the business, but I still have a great team over at Clearsense. When technical issues hit my desk, I don't have an answer, and that's a humbling experience. Whereas on the professional services side, I've been doing this so long that even if I don't have all the right answers, I have a good idea of how we should overcome certain challenges within the business.

What factors will impact the company’s strategy and performance over the next few years?

Growing those emerging service lines. We have a history of great delivery on the EHR side of the house and we are proud of that. With Jason and me coming back, we are infusing a culture of celebrating our wins, us against the world, and driving the type of growth that Optimum had in the early days. We are demonstrating that through high quality work, great relationships, and accountability.

We are proud to have become an Elite ServiceNow partner in a short period of time, and we are excited about the growth of that business.

We earned Workday certified partnership late last year and we are in the infancy stage of kicking off that practice. We hired our first practice director. Workday’s presence in healthcare is similar to Epic’s back in the day, with great software. They are starting to verticalize their offerings, having specific healthcare-focused salespeople and enablement, and looking at healthcare differently than their financial services customers.

On the cloud side, we are an AWS certified partner and a Microsoft partner. We are building that capability, whether it’s moving your DR to the cloud or moving an Epic instance to the cloud. We did the first one with Baptist last year, and we’re touting that as a use case.

We will be able to cross-sell into a healthcare system with these multiple offerings, essentially becoming more of a digital transformation professional services organization than an EHR implementation company as we have historically been.

It’s important for us to make that transition and stay relevant in today’s ecosystem with the technologies that our customers are using. We want to be able to help them through the process of being successful with their implementation of those products. We need to give our message concisely, understand how each type of software impacts the other, and make ourselves a vendor of choice for our provider clients, a trusted partner, whether it’s Epic, Cerner, Workday, ServiceNow or cloud migration.
