HIStalk Interviews Cyrus Bahrassa, CEO, Ashavan

Cyrus Bahrassa is founder and CEO of Ashavan.

Tell me about yourself and the company.

I’m somebody who would not have imagined being here talking to you. When I was a kid, healthcare IT was not on my radar. I’m not sure if anybody really does have it on the radar. I wanted to be a commercial airline pilot. In college, though, I found a passion for education. I thought I would be a teacher and eventually a principal or superintendent. But then a little company called Epic reached out to me in my senior year and said, you probably haven’t heard of us, but you should check us out. I did and I liked it enough to join the company, thinking I would be there just a couple of years. Instead, I stayed for seven, and somehow have been in the industry for my entire career.

Ashavan is a healthcare interoperability consulting company, or at least that’s what I tell people. But truthfully, that’s not the full story. Fundamentally, we are a company that is focused on choices. Our mission is to make the best choice the easiest one, and everything that we are trying to do is in service of that mission. Today, we’re focused on interoperability, and that’s going to be our focus for a while. But the pie-in-the-sky vision is that 50 years from now, we are helping people and businesses make optimal choices in other areas, things like sustainability and money. When we can make it easy to do the right thing, that’s where I would love for Ashavan to play a role.

Are the big non-healthcare companies surprised by the complexity of getting and using healthcare data?

Yes, especially the ones that are newer to healthcare. Maybe they have experience in technology in other ways, but they’re jumping into the healthcare side and saying, why is it so hard and complex and convoluted? At the end of the day, it feels like a jungle. That’s what we see and hear from our customers. We are helping them carve a path through the jungle and helping them understand that this is the right way to go about this. You will be able to move as efficiently and practically as possible.

That doesn’t mean that it will be easy. It will still be hard. We can’t claim to have magic bullets that make everything push-of-a-button automatic, but at least we can help you move faster and more efficiently and have that certainty and that clarity that you might not have if you didn’t have that guide with you.

What is the incentive for a software vendor or provider to share information?

It’s a hard question to answer, because the incentives probably aren’t as strong as they could be. What I would preach in idealistic fashion is that we have to look at this from the perspective of customer service and doing the right thing for people and for their health. Sharing data and providing greater interoperability is an important piece of that. Do those entities have a vested interest in those things? Probably not, unfortunately. I hate to say that, but the reality is that they may not maximize their individual potential when we’re working in service of that common good. But that is a North Star that we have to have.

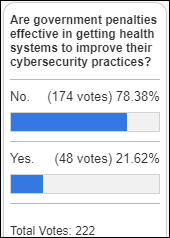

Unfortunately, that’s why government plays such a big role in healthcare IT and in interoperability. Sadly, the pace of innovation and interoperability is mostly dictated by the pace of change from the government side. You have USCDI, for example, and that’s a great thing. It allows for a certain floor in terms of API-based interoperability and retrieval of data. But that floor has remained stagnant for a while. It’s been at the Version 1 of USCDI for a few years now, and it won’t be until 2026 that that floor moves up to Version 3. Then it won’t move again until the ONC decides to make that change at some point in the future, which of course is dependent upon the circumstances in the industry, the political circumstances, and what kind of administration is in power. The challenge here is that we are working at the pace of government.

Where does the patient fit in?

The patient plays a huge role. First and foremost, they absolutely have a right to their records. They have a right to obtain records when they have the appropriate authorization for a family member. That is super important and something that has to be enforced strongly.

I look at it like personal finance. We probably need to teach people to a level of literacy as they are growing up and as they are in young adulthood to help them be better healthcare consumers. More and more states are mandating education around personal finance in middle school and high school. We need something similar for healthcare: individuals who know what their rights are, who understand the importance of having access to their data, and who understand the value of that access and that data exchange in practical terms.

When I explain interoperability to folks who don’t really know about it, I talk about smartphones. Imagine that you’re an Android owner, but you want to switch to an iPhone. You love everything about the iPhone, you can’t wait to switch, but then you find out that there’s no way to get all your photos, contacts, and messages pulled over automatically onto your iPhone. At best, you’ll have to manually key in everything, or at worst, you won’t be able to move some stuff at all.

That would be really, really bad interoperability. Thankfully, we don’t have that, but that’s a practical example of the power of data exchange and interoperability. Then, helping someone understand in the context of healthcare why that’s significant. You want to be able to switch your doctor, you’re moving to a new location, or you’re traveling and you need care in a new place. Having the ability to move that data and make things seamless, easy, and powerful is so important.

Apple Health was all the rage when it was announced, even though Google and Microsoft had failed in trying to do something similar. Has the uptake of Apple’s health-related capabilities proven that demand exists for patients to manage or transfer their own record?

There was a moment where personal health record apps had this big shining glow around them and everyone was pretty hyped about their potential. They are important, don’t get me wrong, but it’s this problem in healthcare and interoperability where you can get the data, but then how do you use it? It’s one thing to have it there, but it has to be usable and actionable.

Allowing patients to pull their data through Apple and through other services is wonderful, but do they have that literacy and empowerment to use it? Do they have the ability to connect it somewhere else so that they can switch to a new provider or switch to a new location and have that data be equally understandable and actionable?

The ability for these personal health record apps to pull in that data, showcase it, and surface it to the patient is good for them and for their ability to navigate the healthcare ecosystem. But some folks are not going to pay attention to it as much, especially if they are relatively healthy or their health stays pretty constant and they don’t necessarily think about healthcare very often. Some folks, again, are just not going to have that literacy or that capability to be able to work through that. We have to do things as an industry, as a society, to help them navigate that better, to empower them, and to safeguard them.

My last thought here is that a lot of folks don’t understand what HIPAA truly covers and that some of these digital health applications, unless they are acting as a business associate of a covered entity, aren’t subject to the HIPAA requirements. I’m not sure that most people will ever understand that. That means that it’s even more important for us to craft the laws and regulations that provide a suitable level of privacy protection so that the protection of their information is automatic, no matter who the holder of it is. Again, this is all about choices and making the right thing the easy thing. If we can update those laws so that your information is protected in all these different situations and apps, that’s better for everyone.

Is the answer to update HIPAA or to implement general privacy protection?

It would be pretty comprehensive. I say that because of a couple of factors. What will prompt some sort of action from Congress, whether it’s in two years or 20 years, is these challenges around social media, and now also AI, and the way that our data is used and monetized. I see that as being a big driver for some sort of action on privacy legislation.

Unfortunately nowadays, there’s a tendency for Congress to take a long time and then do this big sweeping package when the time presents itself. I can see them getting to this point where privacy legislation is going to happen, and then healthcare and healthcare apps become a component of that larger bill. Like a lot of humans, I’m terrible at predicting the future, so I could very well be wrong and I’m going to be willing to admit that, but that’s what I see.

As someone who has worked both for and with Epic, do you see them as an interoperability friend or foe?

I see them as simply a self-interested player in the market, just like any other entity out there in our industry.

I believe a few things. Epic has a lot to offer when it comes to interoperability. They and Athena are at the forefront in terms of the different options you have and capabilities that you have for integrating with them, whether that’s HL7 interfaces, both FHIR APIs and proprietary APIs, Kit, etc. They have lots of available options that several other EHR vendors cannot claim to have.

At the same time, Epic is guilty of certain practices that are either common in the industry or that are unique to them. The common thing is high fees. I’m a big believer that we have to crack down on the fees and just charge for the cost of interoperability without making it a profit center, but simply a pass-through of the costs.

I also think that we need to address things like exclusive marketplaces and exclusive programs. You have the Epic Vendor Services program and Epic on FHIR, but you also have through Cerner what is now their Oracle Partner Network, which used to be their code program. You have the Athenahealth Marketplace. Similar to the Apple App Store and Play Store, those are the exclusive venues that you must go through to publish an app and use it with the particular EMR. I think that’s wrong. You should be able to have additional marketplaces. You should be able to pull down apps and list apps in multiple places.

Those are the general things that I would say for Epic. A specific thing that I was concerned about was their Partners and Pals program. I spoke out about this several months ago when it was first announced. To me, that was the wrong move, because it at least implied (and no one ever denied this) that Epic was providing exclusive integration options to certain Partners and Pals that would not be generally available to everyone. I think that’s wrong and anti-competitive. For interoperability, the same capability should be available to all entities at the same time and at the same cost. That’s an important piece, because interoperability goes hand in hand with competition, with a freer market, with allowing people to have better choices and to minimize the switching costs between those choices.

AI companies are desperate for data and will likely bring their own ideas about interoperability. How will that business need influence the technical side of interoperability?

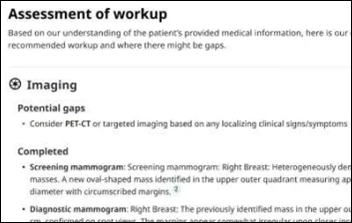

It will increase the demand for sure. The key challenge is that when we’re talking about healthcare data, it doesn’t all live in just EHRs. We’ve got the ONC’s certified health IT program to ensure that these EHRs have this minimum level of data. Certainly they are a wealthy repository for that, but you’ve got data living in all kinds of other systems, whether it’s an uncertified EHR, a behavioral health system, a system used by a long-term care facility, a PACS, or a lab system. It’s this big, hairy beast and the fragmentation problem in healthcare technology is a real challenge in interoperability, because you’ve got data that’s living in all these different places.

With regard to the business side, there will be demand, but the challenge is how you target that demand to the right entities. If you’re a pharma company, you will have to take it one by one in terms of where you want to get that data and get those capabilities to pull that data, because it’s not like there’s one source or a one-stop shop.

When I explain interoperability to folks and some of the challenges, I talk about Uber. With Uber, you can see a map and a timing for your ride. Why is that possible? It’s because Uber has integrated with Google and their Google Maps functionality to be able to provide that information. Uber only had to integrate with Google and that’s it. They get everything that they might need.

But that’s not the same thing in healthcare. You can’t go to one single place and get everything you need, even as a patient. Our information is in the EMR, but also in the PCP’s EMR, the hospital’s EMR, in the Walgreens where we got our flu shot last year, and in the urgent care. Being able to source the data from all those different places is something that is going to be really challenging, whether you’re a business or a person.

How does Nashville’s innovation and digital health environment compare to that of Silicon Valley, Route 128, and Austin?

I’m biased. I love Nashville. I tell people that it’s the greatest city on earth. I’m sure lots of folks would disagree with me, but it is a wonderful place that I hope lots of people can at least come and visit, if not move to.

In terms of the entrepreneurial scene and the technology scene, it has really flourished. I’ve only been here five years, so I can’t say that I have a perfect window into everything that has gone on. But a lot of people are focused on making Nashville a wonderful place. There’s a lot of positive energy around technology, innovation, and entrepreneurship. We have a really thriving entrepreneur center, a couple of them actually. We’ve got great programs and accelerators that support these different startups. There are certain people out there who are trying their best to attract investment, attract attention, and build that culture.

We’re a tight-knit community in the way that we come together and support each other. I’ve always felt like people are out there willing to help, willing to lend a hand, and meet or introduce you to someone, which I think is really great. That supportive environment has made it a wonderful place to have a business and to live. We’re doing the right things.

It always helps when you have a big name who is drawn to the city, like Oracle. I know there was the announcement about moving the headquarters here. I’m interested to see how that plays out and what it looks like, but even just having that campus here and what they’re doing to build that up and build up the East Bank of Nashville is really special. That drives attention and creates that network effect, because more and more organizations will now take a look at us and say, this is a cool place, we should check it out, we want to be here.

What are the company’s plans for the next few years?

I have to get better as an entrepreneur. That’s the thing that’s top of mind for me. We are three and a half years in. It’s gone well, but I’m still learning. I’m still improving. I probably will be every day of my life. Even right now, I’m trying to figure out how to be a leader and not just a hero. That’s a really important thing on my mind.

In terms of the company, healthcare will always be a focus area, but we definitely want to expand beyond that. Interoperability is a big deal in a lot of industries. You’re talking financial technology, manufacturing, logistics, etc. I was at a conference recently on smart transportation and mobility. There’s a huge need for better interoperability so that the streetlights, stop signs, vehicles, and scooters can all talk to one another. That’s going to be important for a modern transportation infrastructure.

What we want to do at Ashavan is earn a seat at the table in those industries. We want to be a part of bringing about that change and bringing about better interoperability in those areas, because when we can make the best choice the easiest one for those consumers and those companies, we’re going to feel like we’re making a positive contribution to society, and that’s going to be really special.
