
News 6/23/21

Top News

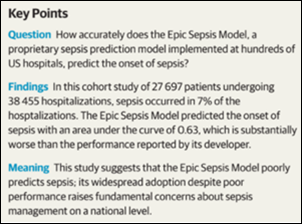

The Epic Sepsis Model predicts sepsis poorly while flooding clinicians with inappropriate alerts, a Michigan Medicine study concludes.

The authors note that while hundreds of hospitals are using the Epic-distributed model, the company has divulged little about its methods or its real-world performance.

They also note that at UM, clinicians would have needed to investigate 109 Epic-flagged patients to find one that required sepsis intervention.
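To put the 109 figure in perspective, here is a minimal sketch that treats it as a "number needed to evaluate" and inverts it to get the implied share of flagged patients who actually needed intervention. The function names are illustrative, and the percentage is my reading of the sentence above, not a number quoted from the study.

```python
def ppv_from_number_needed(number_needed: float) -> float:
    """Implied positive predictive value if `number_needed` flagged
    patients must be reviewed to find one true case."""
    if number_needed < 1:
        raise ValueError("number_needed must be at least 1")
    return 1 / number_needed


def number_needed_to_evaluate(ppv: float) -> float:
    """Inverse relationship: flagged patients reviewed per true case."""
    if not 0 < ppv <= 1:
        raise ValueError("ppv must be in (0, 1]")
    return 1 / ppv


# 109 comes from the article; everything else is arithmetic.
implied_ppv = ppv_from_number_needed(109)
print(f"Implied share of useful alerts: {implied_ppv:.2%}")  # 0.92%
```

In other words, under this reading fewer than one in a hundred alerts pointed at a patient who needed sepsis intervention.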

The article warns of “an underbelly of confidential, non-peer-reviewed model performance documents that may not accurately reflect real-world model performance.”

An accompanying JAMA Internal Medicine editorial warns that Epic’s model was developed in just three US health systems six years ago and health systems should validate and recalibrate such models before implementing them. They draw the parallel that just as clinician decision support rules are reviewed by local clinicians before they are offered for use in patient care, local data scientists should evaluate any algorithms that were developed elsewhere.
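As a rough illustration of what local validation could look like, the sketch below groups patients by the model's predicted risk and compares each group's average prediction with the sepsis rate actually observed. It is one simple calibration check, not the editorial's or Epic's method, and the scores and outcomes are made-up placeholders.

```python
from statistics import mean


def calibration_table(predicted, observed, n_bins=5):
    """Compare mean predicted risk with the observed event rate per risk bin.

    predicted: risk scores in [0, 1] from the vendor model (hypothetical here)
    observed:  1 if the patient developed sepsis, else 0
    """
    if len(predicted) != len(observed):
        raise ValueError("predicted and observed must be the same length")
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(predicted, observed):
        idx = min(int(p * n_bins), n_bins - 1)  # a score of 1.0 goes in the top bin
        bins[idx].append((p, y))
    rows = []
    for i, members in enumerate(bins):
        if not members:
            continue  # skip empty risk bins
        rows.append({
            "bin": i,
            "n": len(members),
            "mean_predicted": mean(p for p, _ in members),
            "observed_rate": mean(y for _, y in members),
        })
    return rows


# Made-up local data purely for illustration.
scores = [0.10, 0.15, 0.50, 0.55, 0.90, 0.95]
outcomes = [0, 0, 0, 1, 0, 1]
table = calibration_table(scores, outcomes)
for row in table:
    print(row)
```

A large gap between `mean_predicted` and `observed_rate` in the local data would be a signal that the model needs recalibration before going live.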

Reader Comments

From Map Bucks: “Re: pay for remote work. My health IT employer is considering adjusting pay to local conditions for those who work remotely (the company is in an expensive metro area). Does this seem OK?” It’s a complex issue. The black-and-white side of me says that companies should pay based on the job, not where the worker sits while performing it. A Dallas company might not be able to hire someone from the Bay area for what it pays locally, but that candidate always has the option to move to Texas. Companies shouldn’t pay more just because an employee chooses a long commute, a more expensive house, or to live across the state line where it costs more – that seems to be a slight creep toward socialism, as in “you need to give me a raise to perform the same work because our new child is costing us more.” I would also not put it past some employees to fake their residence to earn more, such as borrowing a relative’s New York City address. Perhaps the stickiest issue is reducing compensation for someone who leaves an expensive metro, although that doesn’t make sense to me. My hot take is that the job is worth what it’s worth and the employee is free to live wherever they want but also with the expectation that their voluntary choice doesn’t affect their paycheck.

From D.V. Wormer: “Re: Avaneer. Which problem of interoperability can blockchain really solve?” Dean Wormer, instead of being a downer who undermines the work of roomfuls of vendor marketing people, just mindlessly accept that the US healthcare system lags the civilized world in accessibility, outcomes, and cost only because we don’t use enough AI, blockchain, and robotic process automation (try not to notice that the many countries that outperform us also don’t use them and that the folks touting those technologies are the same ones who sell them). IBM is involved in Avaneer, which isn’t a strong indicator of commitment, and so far the only customers I’ve seen mentioned are also Avaneer investors. Blockchain is a hammer looking for nails that never seem to get pounded, and while healthcare has a ton of inefficiency and lack of interoperability (weren’t government-subsidized EHRs and HIEs supposed to fix those problems?), the historic safe bet is to be skeptical of companies that pre-profess their technology’s ability to make it better. I’ve been in health IT long enough to skew cynical, so I’ll invite more glass-half-fullers to weigh in. I’ll be as interested as the next person to see hard data from an Avaneer-using health system that saves a ton of money and passes those savings along to patients (if for no other reason, because that has never happened in our profit-motivated system).

Webinars

June 24 (Thursday) 2 ET: “Peer-to-Peer Panel: Creating a Better Healthcare Experience in the Post-Pandemic Era.” Sponsor: Avtex. Presenters: Mike Pietig, VP of healthcare, Avtex; Matt Durski, director of healthcare patient and member experience, Avtex; Patrick Tuttle, COO, Delta Dental of Kansas; Chad Thorpe, care ambassador, DispatchHealth. The live panel will review the findings of a May 2021 survey about which factors are most important to patients and members who are interacting with healthcare organizations. The panel will provide actionable strategies to improve patient and member engagement and retention, recover revenue, and implement solutions that reduce friction across multiple channels to prioritize care and outreach.

June 30 (Wednesday) 1 ET: “From quantity to quality: The new frontier for clinical data.” Sponsor: Intelligent Medical Objects. Presenters: Dale Sanders, chief strategy officer, IMO; John Lee, MD, CMIO, Allegheny Health Network. EHRs generate more healthcare data than ever, but that data is of low quality for secondary uses such as population health, precision medicine, and pandemic management, and its collection burdens clinicians as data entry clerks. The presenters will review ways to reduce clinician EHR burden; describe the importance of standardized, harmonious data; suggest why quality measures strategy needs to be changed; and make the case that clinical data collection as a whole should be re-evaluated.

Previous webinars are on our YouTube channel. Contact Lorre to present your own.

Acquisitions, Funding, Business, and Stock

NextGen Healthcare announces that President and CEO Rusty Frantz will leave under a “mutual separation” agreement that is effective immediately. He has also left the company’s board. Frantz did not indicate the reason for his departure, but he said in a statement that leaving the company will allow him to “put 100% of my focus on my most important priority – my family.” The company has launched a search for his replacement. Frantz took the role in June 2015, with NXGN share price increasing 5% in that time versus the Nasdaq’s 181% gain.

Cleerly, which applies AI to coronary imaging to predict heart attacks, launches itself with a $43 million Series B funding round. Founder and CEO James Min, MD was a professor of radiology and medicine at Weill Cornell Medical College, where the company’s technology was developed.

RCM services vendor Services Solutions Group, formerly the services division of NThrive, renames itself to Savista.

Sales

- Arkansas Pediatric Clinic chooses Emerge data conversion and integration solutions for its migration to Athenahealth.

- FirstLight Home Care joins Dina’s digital home care coordination network.

- The Ohio State University Wexner Medical Center will offer Type 2 diabetes patients access to Teladoc Health’s Livongo for Diabetes Program.

People

Industry long-timer Tim Knoll, MBA (PatientSafe Solutions) joins healthcare staff safety technology vendor Strongline as VP of sales.

Glytec hires Nausheen Moulana, MBA, MSEE (Kyruus) as CTO.

Ascend Medical hires Michael Justice, MBA (Trinisys) as CTO.

Meera Kanhouwa, MD, MHA (Deloitte) joins Ernst & Young Global Consulting Services as executive director in digital health. Her experience includes 10 years as a US Army ED physician with deployment during Operation Desert Storm.

Announcements and Implementations

Amazon launches AWS Healthcare Accelerator, a four-week virtual program for 10 startups that will learn about using AWS to develop healthcare solutions.

A new KLAS report on population health management technology vendors finds that Arcadia, Epic, and Innovaccer stand out.

Government and Politics

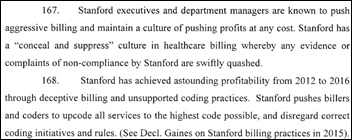

A federal appeals court rejects Stanford Healthcare’s argument in a $500 million Medicare billing fraud case involving Epic-enabled upcoding and unbundling of charges. The complaint says that Stanford doubled its Medicare revenues without increasing its expenses, which the complaint says could only be done by creative coding.

In Australia’s New South Wales, NSW Health will receive $105 million from the state’s digital services initiative for the first phase of its EHR replacement project, with additional funds budgeted from its COVID-19 relief package to expand telehealth and to improve integration between ambulance services and hospital EDs.

Other

KHN notes that big US health systems are opening medical facilities in other countries, such as Cleveland Clinic spending $1 billion to open a clinic across the street from London’s Buckingham Palace Garden that will offer only profitable elective surgeries and treatments in hopes of attracting American expatriates and rich Europeans. The article questions why those systems, which don’t pay taxes, are allowed to pursue such aggressive international business moves.

Sponsor Updates

- Healthcare Growth Partners advised Medullan on its sale to ZS.

- University of Texas at San Antonio joins Optimum Healthcare IT’s healthcare IT apprenticeship program.

- Premier announces the 2021 winners of its Breakthrough Awards.

- Goliath Technologies offers a free Citrix Health Check.

- KLAS recognizes Arcadia as a leader in market energy and customer experience in its “2021 Population Health Management Overview” report.

- TeleConsult Europe selects enterprise imaging from Agfa HealthCare.

- Azara Healthcare names George McGovern (MedTouch) VP of finance and Charlene Grasso (Cambridge Consultants) director of HR.

- The local news profiles CareSignal’s partnership with Americares and the Greater Hickory Cooperative Christian Ministry to serve vulnerable populations.

- Cerner shares a new client achievement, “South Miami-Dade hospital reaches HIMSS Stage 6, 7 and wins Enterprise Davies Award in same year.”

- Ellkay will exhibit at the virtual AHIP Institute & Expo June 22-24.

The following HIStalk sponsors have been recognized in Black Book’s latest customer satisfaction ranking of financial software solutions:

- Enterprise patient identifier solutions – Experian Health

- Patient payment technology – Waystar

- Revenue recovery & accounts receivables solutions – Change Healthcare

- Enterprise resource planning – Symplr API Healthcare

- Hospital claims management systems – Experian Health

Blog Posts

- Scoring well in quality measures has just become more difficult (AdvancedMD)

- KLAS Research: Arcadia Stands Out Among Peers in Delivering Comprehensive Population Health Management Out-Of-The-Box Functionality (Arcadia)

- Glassdoor Names Jeremy Schwach a Top CEO (Bluetree)

- Leading Through Change in Healthcare Technology Management (CereCore)

- Want to Succeed at Value-Based Care? Launch a Chronic Care Management Program (ChartSpan)

- The Key to Denial Management in Healthcare: Intervene on the Front End (RCxRules)

- Childbirth: A Mother’s Joy and Too Many Others’ Nightmare (Obix Perinatal Data System, developed by Clinical Computer Systems)

- Campus Update: Immersing Ourselves in Local Art (CoverMyMeds)

- Learn How Analytics Can Help Pinpoint Length of Stay Opportunities (Dimensional Insight)

- Improving Patient Safety Series: Data Truncation and Special Characters (EClinicalWorks)

Contacts

Mr. H, Lorre, Jenn, Dr. Jayne.

Get HIStalk updates.

Send news or rumors.

Contact us.

Re: the Map Bucks comment. Paying telecommuters based on salaries in their city would be a logistical nightmare to manage. I took my company (IT consulting, software sales and support) fully remote seven years ago and have employees currently residing in four different states. My salaries are based on the position, not the location. It has served me well. The bigger challenge as the employer is managing the labor laws in different states. We have a fantastic payroll vendor who takes care of all my tax reporting, but we had to obtain employer UI numbers for all the states. The biggest challenge is unemployment insurance. I have gone through phases where I’ve had to have different policies for each state; currently one of my states offers to insure other states through a partnership with a bigger insurer.

As an employer, I hope the fear many employers have had that their employees will be less productive remotely has been proven false for the most part over the past year. Certainly I’ve had a couple of employees through the years who took advantage (fired an employee via video conf at 9 am who was still drunk from an all night rager), but for the most part, employees work harder (no chit chat with coworkers) and longer hours than they did when in the office.

Re: Epic Sepsis Model

The real problem is not the performance of the model per se – but the lack of transparency regarding model development and evaluation process. Looks like a large number of health systems using this model decided to do so with eyes wide shut just because the model was available “out of the box” with Epic and easy to turn on.

What is most surprising is that despite claiming that its Cosmos database has 100 million patient records, Epic did not do a more comprehensive training and testing of its model on that data.

Epic needs to issue a patient safety “recall” of this model asap and should commit to updating its model and submitting it for peer review before turning it back on again.

Epic is the one that employs legions of software developers and data scientists. Epic’s customers are the ones that have comparatively limited capacity for software development and data science since the customers have to actually practice medicine and provide care, sometimes in very financially constrained settings. Epic knows that, yet they still put this out there as a closed, poorly performing algorithm to switch on. It is not just bad design or Epic’s usual arrogance about thinking they know better. Epic has a responsibility to practice data science ethically and they failed to do so.

For the health systems that do have data science folks, implementing and testing these models represents a significant time overhead (implementation, data gathering, and analysis/testing), only to discover that flipping a coin would be a more accurate approach. The burden on providers who have to deal with the noise along with their other duties feeds the burnout that systems are laser-focused on limiting. This time sink equates to A LOT of wasted paid hours. I also believe that systems need to implement four of these models to get honor roll status. If this is the case, I would hope to see that changed.

False positives are one thing, but the false negatives were more troubling. And was there reliance on the system that may have been overoptimistic? The RCA on this should include something along the lines of an Ishikawa model. I would suggest that the backbone elements would be:

- Development

- Verification and Validation

- QMS compliance

- Requirements

- Data

- Users

- System

- Implementation

- Warning systems post rollout

There are probably dozens of sub bones off that backbone…

The evaluation should be independent but inclusive and also involve at least two deployments as well as being public with the results.

Of course, the study should be repeated at least once to confirm the results.

A long time ago, an important vendor of ours released a much-hyped add-on. The core product was outstanding, so we had high hopes.

However there were danger signs too. The add-on had gone through an extremely long development cycle and was repeatedly delayed. Customer consensus was that the add-on was “unusable” until version 3, and even then it was “clunky”.

As a technical administrator for this system, I felt the need to step in and speak against adopting the add-on. It was clearly not up to standard and possibly never would be. Unless you had really smart and capable users, willing to adapt to the clunky add-on, failure and disappointment were inevitable. Yet our users were used to the high-quality core product; they were going to assume that any add-ons would perform just as well.

This Epic Sepsis Predictor sounds very much like my past experience. And when you add in already widespread alert/alarm fatigue in clinical settings? It just feels like piling problems on more problems.

Customers have complete transparency into the sepsis model. The full mathematical formula and model inputs are available to administrators on their systems. Accuracy measurements and information on model training are also on Epic’s UserWeb, which is available to our customers.

We’re all friends: post that information here and I’ll shut up about transparency.

Sorry Sam,

But at this point, there is a need to be more transparent. I am not going to hammer someone who has tried to implement an AI solution, but you have to be transparent or we are in the IBM Watson Oncology situation. Be transparent and let data scientists evaluate the solution or do a Patient Safety Recall. Yah, if you are alerting on false positives far more than actual positives, I consider that a patient safety situation.

There is no refuting of the paper, so unless you are refuting the paper you should be responding with data and facts, not proprietary affirmations.

This isn’t an attack on Epic. I need AI to work, I need big data to enhance our medicine, but we must accept that there will be failures if we are to grow. Giving us the party line isn’t acceptable.

Why did this fail?

Sorry Dr. Sam Butler,

I checked with four people who are ‘customers’, they don’t have access to the information you indicated. Maybe there is a specific place they should go to see the algorithm, methods, etc, but they couldn’t get there on UserWeb.

Maybe you meant something else? Somewhere else?

If you want AI to work, if you want analytics to work, or ML, IoT, SDoH, the first step is to use an orchestrated data platform that allows for “real” big data applications to work, that hyperscales in multiple areas. Build your analytics and deep tech on top of this platform. Businesses outside of healthcare and many in healthcare have been doing this for years. And even though Epic is the big dog on the porch for EMRs, they’re the pups under the porch in the analytic world. Build your analytics strategy or have the well-known names help you build your strategy.

I don’t have access to the sepsis algorithm study, and the abstract is confusing.

The authors state that the algorithm is to predict that a patient might develop sepsis, but then write about identifying patients with sepsis.

Did the algorithm actually predict that 33% of the patients who developed sepsis may be at risk with advanced warning that would have prevented sepsis? Maybe someone with access to the article can clarify what was measured and how.

You are on a very key point. We did a POC a while back and were taken aback by how other modelers discussed their results. Our model was specifically trained to predict a diagnosis of sepsis hours in advance, with the idea that it might drive interventions. “Predicting” that a patient has undiagnosed sepsis in the moment is… uh… not valuable in our opinion.

By the way, we achieved AUC above 0.8, but did not commercialize the model for various reasons. Read about it here: https://www.researchgate.net/publication/321417682_Predicting_Severe_Sepsis_Using_Text_from_the_Electronic_Health_Record

Maybe EPIC should have called us (har har)!
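The distinction drawn above between predicting sepsis hours in advance and flagging it "in the moment" can be made concrete with a small sketch. It counts an alert as an early warning only if it fired some minimum lead time before the recorded diagnosis. The four-hour default, the function name, and the timestamps are all hypothetical, not taken from the study or from the commenter's model.

```python
from datetime import datetime, timedelta


def is_early_warning(alert_time: datetime,
                     diagnosis_time: datetime,
                     min_lead: timedelta = timedelta(hours=4)) -> bool:
    """True only if the alert preceded diagnosis by at least `min_lead`.

    The 4-hour default is an arbitrary placeholder, not a clinical standard.
    """
    return diagnosis_time - alert_time >= min_lead


# Hypothetical timestamps for illustration.
diagnosis = datetime(2021, 6, 23, 14, 0)
early_alert = datetime(2021, 6, 23, 8, 0)   # six hours ahead
late_alert = datetime(2021, 6, 23, 13, 30)  # thirty minutes ahead

print(is_early_warning(early_alert, diagnosis))  # True
print(is_early_warning(late_alert, diagnosis))   # False
```

Scoring a model only on alerts that pass a check like this would separate genuine advance warning from alerts that merely restate what clinicians are about to document.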

Medical AI/ML algorithms developed by technology or other industry vendors (or anyone, for that matter) should be required to pass the same research-level testing and validation rigor that any other medical protocol would undergo, and the findings should be peer-reviewed and published before being deployed.

If you step outside the hyped world of AI/ML, a hospital system would not implement a new clinical protocol (for example, if this were a written risk-stratification model for sepsis) without it first being tested, validated, and published, and even then they usually want to wait for the Professional Societies to back the protocol before widely accepting and integrating it into practice. Please explain then why hospitals are just blindly accepting these AI/ML algorithms, when there is already a standard for approving clinical decision-making tools?

I suspect a large part of the issue here is that there is still not enough medical involvement/integration into the IT/IS departments at many hospitals. Their governance models still view IT and the EHR as a “cost center” and not a “strategic asset” to the organization, and think the enhancements that are being added during EHR upgrades are all functionality updates, when in fact they are slipping more and more clinical content and tools into the upgrades.

Coming from the overhyped world of AI/ML, I’d say HITPM is on a good point, but I would tease apart validation of the model and validation of protocol. No model will be 100% accurate, so validating a model comes down to “sufficiently accurate” (trading off precision and recall) and some sort of explainability. In our work on sepsis models, we achieved AUC over 0.8 with the ability to see precisely which parts of the medical record led to the conclusion. (See https://www.researchgate.net/publication/321417682_Predicting_Severe_Sepsis_Using_Text_from_the_Electronic_Health_Record) We did not commercialize it, not because it was invalid but because it needed to drive a clinical protocol that added value.

And that’s the issue. First, practitioners’ intuitive sense is really good… so any model has to find marginal incremental cases that they’d miss. Second, you need to design, validate, and implement a clinical protocol for what to do when the model alarms. This has a poor ROI.

And it’s worse when you look at models for a host of acute conditions. We could easily have created additional models for renal failure, etc. But each one would trigger a separate clinical protocol… each of which needs to be designed, validated, and implemented.

Cerner has had predictive models for a host of conditions for years. (Their sepsis model is free!) But they are infrequently used because of the above.
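For readers less familiar with the metrics mentioned in this thread, here is a minimal sketch of the precision/recall trade-off and of AUC, computed on a tiny made-up set of scores. The data and function names are illustrative assumptions and have nothing to do with any vendor's model; AUC is computed the plain way, as the fraction of (sepsis, non-sepsis) patient pairs that the model ranks correctly.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when alerting on scores >= threshold."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y == 1)
    fp = sum(1 for s, y in pairs if s >= threshold and y == 0)
    fn = sum(1 for s, y in pairs if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def auc(scores, labels):
    """Share of positive/negative pairs ranked correctly (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Made-up scores: 1 = developed sepsis, 0 = did not.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
labels = [1, 1, 0, 1, 0, 0, 0, 0]

for threshold in (0.5, 0.75):
    p, r = precision_recall(scores, labels, threshold)
    print(f"threshold={threshold}: precision={p:.2f}, recall={r:.2f}")
print(f"AUC={auc(scores, labels):.3f}")
```

Raising the threshold from 0.5 to 0.75 in this toy data removes the one false alarm but misses a real case, which is the trade-off a site has to weigh against alert fatigue. An AUC of 0.5 corresponds to the coin flip mentioned earlier in the comments.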