
A Machine Learning Primer for Clinicians–Part 1

Alexander Scarlat, MD is a physician and data scientist, board-certified in anesthesiology with a degree in computer sciences. He has a keen interest in machine learning applications in healthcare. He welcomes feedback on this series at drscarlat@gmail.com.

AI State of the Art in 2018

Near human or super-human performance:

- Image classification

- Speech recognition

- Handwriting transcription

- Machine translation

- Text-to-speech conversion

- Autonomous driving

- Digital assistants capable of conversation

- Go and chess games

- Music, picture, and text generation

Considering all the above — AI/ML (machine learning), predictive analytics, computer vision, text and speech analysis — you may wonder:

How can a machine possibly learn?!

As a physician with a degree in CS and curious about ML, I took the ML Stanford/Coursera course by Andrew Ng. It was a painful, but at the same time an immensely pleasurable educational experience. Painful because of the non-trivial math involved. Immensely pleasurable because I’ve finally understood how a machine actually learns.

If you are a clinician who is interested in AI / ML but short on math / programming skills or time, I will try to clarify in a series of short articles — under the gracious auspices of HIStalk — what I have learned from my short personal journey in ML. You can check some of my ML projects here.

I promise that no math or programming is required.

Rules-Based Systems

The ancient predecessors of ML are rules-based systems. They are easy to explain to humans:

- IF the blood pressure is between normal and normal +/- 25 percent

- AND the heart rate is between normal and normal + 27 percent

- AND the urinary output is between normal and normal – 43 percent

- AND / OR etc.

- THEN consider septic shock as part of the differential diagnosis.
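
For readers who are curious what such a rule looks like once a programmer encodes it, here is a minimal Python sketch of the example above. The "normal" reference values, the `Vitals` fields, and the function name are hypothetical and chosen only for illustration; they are not clinical guidance.

```python
from dataclasses import dataclass


@dataclass
class Vitals:
    systolic_bp: float   # mmHg
    heart_rate: float    # beats per minute
    urine_output: float  # mL per hour


# Hypothetical "normal" reference values, for illustration only.
NORMAL = Vitals(systolic_bp=120.0, heart_rate=75.0, urine_output=60.0)


def consider_septic_shock(v: Vitals, normal: Vitals = NORMAL) -> bool:
    """Hand-written rule mirroring the IF / AND / THEN list above."""
    # Blood pressure within normal +/- 25 percent.
    bp_in_band = abs(v.systolic_bp - normal.systolic_bp) <= 0.25 * normal.systolic_bp
    # Heart rate between normal and normal + 27 percent.
    hr_in_band = normal.heart_rate <= v.heart_rate <= 1.27 * normal.heart_rate
    # Urinary output between normal - 43 percent and normal.
    uo_in_band = 0.57 * normal.urine_output <= v.urine_output <= normal.urine_output
    return bp_in_band and hr_in_band and uo_in_band


print(consider_septic_shock(Vitals(100.0, 95.0, 40.0)))
print(consider_septic_shock(Vitals(100.0, 96.0, 40.0)))
```

With these made-up thresholds, a heart rate of 95 satisfies the rule and a heart rate of 96 does not. Every number in the function was typed in by a human, and nothing in it changes when new patients are seen.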

The problem with these systems is that they are time-consuming, error-prone, difficult and expensive to build and test, and do not perform well in real life.

Rules-based systems also do not adapt to new situations that the model has never seen.

Even when rules-based systems predict something, it is based on a human-derived rule, on a human’s (limited?) understanding of the problem and how well that human represented the restrictions in the rules-based system.

One can argue about the statistical validation that is behind each and every parameter in the above short example rule. You can imagine what will happen with a truly big, complex system with thousands or millions of rules.

Rules-based systems are founded on a delicate and very brittle process that doesn’t scale well to complex medical problems.

ML Definitions

Two definitions of machine learning are widely used:

- “The field of study that gives computers the ability to learn without being explicitly programmed.” (Arthur Samuel).

- “A computer program is said to learn from experience (E) with respect to some class of tasks (T) and performance measure (P) — IF its performance at tasks in T, as measured by P, improves with experience E.” (Tom Mitchell).

Rules-based systems, therefore, are by definition NOT ML models. They are explicitly programmed according to a fixed, hard-wired, finite set of rules.

With any model, ML or not, or with any other common-sense approach to a task, one MUST measure the model's performance. How good are the model's predictions when compared to real life? The distance between the model's predictions and the real-life data is measured with a metric, such as accuracy or mean-squared error.
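
As an optional illustration, the two metrics named above can be computed in a few lines of Python. This is only a sketch; the function names and the example numbers are made up for this purpose.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the real-life labels."""
    matches = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return matches / len(y_true)


def mean_squared_error(y_true, y_pred):
    """Average squared distance between predictions and real values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Made-up data, for illustration only.
readmitted_true = [1, 0, 0, 1, 0]   # what really happened (1 = readmitted)
readmitted_pred = [1, 0, 1, 1, 0]   # what a model predicted
los_true = [3.0, 5.0, 2.0]          # real length of stay, in days
los_pred = [4.0, 5.0, 4.0]          # predicted length of stay, in days

print(accuracy(readmitted_true, readmitted_pred))   # 4 of 5 correct -> 0.8
print(mean_squared_error(los_true, los_pred))       # (1 + 0 + 4) / 3
```

Accuracy suits yes/no predictions, while mean-squared error suits numeric predictions, where being off by two days should count for more than being off by one.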

A true ML model MUST learn from each new experience and improve its performance with each learning step, using a loss function and an optimizer to calibrate its own model weights. Monitoring and fine-tuning the learning process is an important part of training an ML algorithm.
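
To make "calibrating its own weights" concrete, here is a minimal sketch of one common approach, gradient descent, applied to a model with a single weight. It assumes a toy model of the form y ≈ w · x and mean-squared error as the loss; the function name, learning rate, and data are illustrative assumptions, not something prescribed by this article.

```python
def fit_slope(xs, ys, learning_rate=0.01, steps=100):
    """Learn the weight w in y ~ w * x by gradient descent on mean-squared error."""
    w = 0.0                 # start with an uninformed guess
    losses = []             # keep the loss history so learning can be monitored
    n = len(xs)
    for _ in range(steps):
        # Loss: how far the current predictions are from the real answers.
        loss = sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / n
        losses.append(loss)
        # Gradient: direction in which the loss grows as w increases.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        # Optimizer step: nudge w in the opposite direction.
        w -= learning_rate * grad
    return w, losses


# Made-up data in which the true relationship is y = 2 * x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

w, losses = fit_slope(xs, ys)
print(round(w, 3))              # close to 2.0
print(losses[0], losses[-1])    # the loss shrinks as the model learns
```

Nobody told the program that the answer is 2. It started at 0 and moved toward 2 because each step reduced the measured error, and the `losses` list is the kind of signal one watches when monitoring training.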

What’s the Difference Between ML and Statistics?

While ML and statistics share a similar background and use similar terms and basic metrics such as accuracy, precision, and recall, there is a heated debate about the differences between the two. The best answer I found is the one in François Chollet’s excellent book “Deep Learning with Python.”

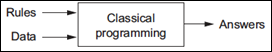

Imagine Data going into a black box, which uses Rules (classical statistical programming) and then predicts Answers:

One provides statistics with the Data and the Rules. Statistics then predicts the Answers.

ML takes a different approach:

One provides ML with the Data and the Answers. ML then returns the Rules it has learned.

The last figure depicts only the training / learning phase of ML known as FIT – the model fits to the experiences learned – while learning the Rules.

Then one can use these machine learned Rules with new Data, never seen by the model before, to PREDICT Answers.

Fit / Predict are the basics of an ML model life cycle: the model learns (trains / fits) and then predicts on new Data.
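
The fit / predict cycle can be sketched with a deliberately tiny model. The class below is a hypothetical nearest-centroid classifier written for this illustration (it is not taken from any library): `fit` receives Data and Answers and stores the learned Rules, here one average value per class, and `predict` applies those Rules to new Data.

```python
class NearestCentroid1D:
    """Toy classifier: remembers the average value of each class."""

    def fit(self, data, answers):
        # Learn the "Rules": the mean of the data for each answer label.
        sums, counts = {}, {}
        for x, label in zip(data, answers):
            sums[label] = sums.get(label, 0.0) + x
            counts[label] = counts.get(label, 0) + 1
        self.centroids_ = {label: sums[label] / counts[label] for label in sums}
        return self

    def predict(self, data):
        # Apply the learned Rules: pick the class whose mean is closest.
        return [
            min(self.centroids_, key=lambda label: abs(x - self.centroids_[label]))
            for x in data
        ]


# Made-up training data: one hypothetical lab value per patient, plus the known answer.
train_data = [1.0, 1.2, 0.8, 4.0, 4.5, 5.0]
train_answers = ["low_risk", "low_risk", "low_risk",
                 "high_risk", "high_risk", "high_risk"]

model = NearestCentroid1D().fit(train_data, train_answers)   # FIT
predictions = model.predict([1.5, 3.9])                      # PREDICT on unseen data
print(predictions)   # ['low_risk', 'high_risk']
```

The thresholds separating the two classes were never written by a human. They follow from the training data, so refitting on different data would yield different Rules.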

Why is ML Better than Traditional Statistics for Some Tasks?

There are numerous examples where there are no statistical models available: on-line multi-lingual translation, voice recognition, or identifying melanoma in a series of photos better than a group of dermatologists. All are ML-based models, with some theoretical foundations in Statistics.

ML has a higher capacity to represent complex, multi-dimensional problems. A model, be it statistical or ML, has inherent, limited, problem-representation capabilities. Think about predicting inpatient mortality based on only one parameter, such as age. Such a model will quickly reach a performance ceiling that it cannot surpass, as it is limited in its capability to represent the true complexity involved in this type of prediction. The mortality prediction problem is much more complicated than considering only age.

On the other hand, a model that takes into consideration 10,000 parameters when predicting mortality (diagnosis at admission, procedures, lab, imaging, pathology results, medications, consultations, etc.) has a theoretically much higher capacity to represent the problem's complexity, including the numerous interrelations that may exist within the data and any non-linear, complex relationships. ML deals with multivariate, multi-dimensional complex issues better than statistics.

ML model predictions are not bound by the human understanding of a problem or the human decision to use a specific model in a specific situation. One can test 20 ML models with thousands of dimensions each on the same problem and pick the top five to create an ensemble of models. Using this architecture allows several mediocre-performing models to achieve a genius level just by combining their individual, non-stellar predictions. While it will be difficult for a human to understand the reasoning of such an ensemble of models, it may still outperform and beat humans by a large margin. Statistics was never meant to deal with this kind of challenge.
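
A back-of-the-envelope calculation shows why combining mediocre models can help. The sketch below assumes a simple majority vote among models that each answer a yes/no question correctly with the same probability and, importantly, make their mistakes independently of one another. That independence is an idealization: real models trained on the same data tend to make correlated errors, so actual gains are usually smaller. The function name is hypothetical.

```python
from math import comb


def majority_vote_accuracy(n_models: int, p_correct: float) -> float:
    """Probability that a majority of n independent models is right.

    Assumes an odd number of models, each correct with probability
    p_correct, and errors that are independent between models.
    """
    if n_models % 2 == 0:
        raise ValueError("use an odd number of models to avoid ties")
    needed = n_models // 2 + 1   # smallest number of votes that forms a majority
    return sum(
        comb(n_models, k) * p_correct ** k * (1 - p_correct) ** (n_models - k)
        for k in range(needed, n_models + 1)
    )


print(majority_vote_accuracy(1, 0.6))     # a single mediocre model
print(majority_vote_accuracy(5, 0.6))     # about 0.683 under the independence assumption
print(majority_vote_accuracy(101, 0.6))   # many mediocre models voting together
```

Under these idealized assumptions, accuracy rises as more models vote, even though no individual model improves.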

Statistical models do not scale well to the billions of rows of data currently available and used for analysis.

Statistical models can’t work when there are no Rules. ML models can – it’s called unsupervised learning. For example, segment a patient population into five groups. Which groups, you may ask? What are their specifications (a.k.a. Rules)? ML magic: even as we don’t know the specs of these five groups, an algorithm can still segment the patient population and then tell us about these five groups’ specs.
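
One widely used algorithm for this kind of segmentation is k-means clustering. The sketch below is a simplified, one-dimensional version written for illustration, with made-up patient ages and three groups instead of five to keep it short. The choice of k-means, the function names, and the evenly spread starting centers are assumptions of this example, not something specified in the article.

```python
def assign(values, centers):
    """Put each value into the group of its nearest center."""
    groups = [[] for _ in centers]
    for v in values:
        nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
        groups[nearest].append(v)
    return groups


def kmeans_1d(values, k, iterations=20):
    """Simplified 1-D k-means (k >= 2) with a deterministic starting point."""
    ordered = sorted(values)
    # Start with centers spread evenly across the sorted data.
    centers = [float(ordered[i * (len(ordered) - 1) // (k - 1)]) for i in range(k)]
    for _ in range(iterations):
        groups = assign(values, centers)
        # Move each center to the mean of its group (keep it if the group is empty).
        centers = [sum(g) / len(g) if g else centers[i] for i, g in enumerate(groups)]
    return centers, assign(values, centers)


# Made-up patient ages; no group labels are provided.
ages = [22, 25, 27, 45, 48, 51, 78, 81, 85]

centers, groups = kmeans_1d(ages, k=3)
print(groups)    # [[22, 25, 27], [45, 48, 51], [78, 81, 85]]
print(centers)   # the learned "specs": the average age of each group
```

The only thing supplied was the number of groups. Which patients belong together, and what characterizes each group, came out of the data.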

Why the Recent Increased Interest in AI/ML?

The recent increased interest in AI/ML is attributed to several factors:

- Improved algorithms derived from the last decade's progress in the math foundations of ML

- Better hardware, specifically designed for ML needs, based on the video gaming industry's GPU (graphics processing unit)

- Huge quantity of data available as a playground for nascent data scientists

- Capability to transfer learning and reuse models trained by others for different purposes

- Most importantly, having all the above as free, open source while being supported by a great users’ community

Articles Structure

I plan this structure for upcoming articles in this series:

- The task or problem to solve

- Model(s) usually employed for this type of problem

- How the model learns (fit) and predicts

- A baseline, sanity-check metric against which the model tries to improve

- Model(s) performance on task

- Applications in medicine, existing and tentative speculations on how the model can be applied in medicine

In Upcoming Articles

- Supervised vs. unsupervised ML

- How to prep the data before feeding the model

- Anatomy of an ML algorithm

- How a machine actually learns

- Controlling the learning process

- Measuring an ML model's performance

- Regression to arbitrary values with single and multiple variables (e.g. LOS, costs)

- Classification to binary classes (yes/no) and to multiple classes (discharged home, discharged to rehab, died in hospital, transferred to ICU, etc.)

- Anomaly detection: each of multiple parameters (temperature, saturation, heart rate, lactic acid, leucocytes, urinary output, etc.) may be within normal range, but taken together, a certain combination may be detected as abnormal. For example, predict patients at risk of deterioration vs. those ready for discharge

- Recommender system: next clinical step (lab, imaging, med, procedure) to consider in order to reach the best outcome (LOS, mortality, costs, readmission rate)

- Computer vision: melanoma detection in photos, lung cancer / pneumonia detection in chest X-ray and CT scan images, and histopathology slides classification to diagnosis

- Time sequence classification and prediction – predicting mortality or LOS hourly, with a model that considers the order of the sequence of events, not just the events themselves

Great article and most likely the foundation for future ML learnings. It will be helpful to continue to update this article as new articles are posted on different topics, e.g., “Supervised vs. unsupervised ML”.

The very best description of ML vs statistics and of ML in general is found here! (Borrowed from Chollet but shared to an audience who would never be exposed to his work). Great work!

Very interesting.

From my point of view, the question is how to incorporate ML objects in presentation platforms like QlikView for clinical decision support.