Readers Write: Healthcare Technology To-Do List: Make Data Valuable for Providers

By Kevin Coloton

Kevin Coloton, MPT, MBA is founder and CEO of Curation Health of Annapolis, MD.

Following my time at HIMSS ’24 and RISE National, I’ve noticed that the healthcare industry treats the maturity of health data exchange as mission accomplished, when in reality we are drowning providers in data that’s not usable.

Health plans and other enterprises previously had tons of data that they kept locked away. Now they are touting that they have opened the valve and data is flowing freely to the electronic health record (EHR) for health systems and providers. The problem is that healthcare providers are now drowning in data, making it almost useless. They’ve been given the full clinical encyclopedia when all they need is the CliffsNotes summary of the patient’s priorities.

There’s a huge difference between clinical data exchange and clinical insight delivery. When we step back and look at the problem that we are attempting to solve, it is one of discerning which data insights are actionable enough to improve patient care encounters, value-based care (VBC) performance, and health outcomes. What’s needed is the synthesis of the massive data set into actionable priorities: for example, knowing the top three data insights that will be most valuable for each patient during the provider’s 10-minute care encounter.

We need to help healthcare providers contextualize data for each patient. A technology “clinical insight” layer is needed within the EHR to deliver the most relevant and impactful insights from the data set to maximize provider engagement. This efficient use of actionable data can superpower provider workflows by reducing the workload of reviewing a full library of patient data and enhancing the value of time spent with patients.

Managing thousands of patients across an entire calendar year requires an overwhelming amount of data. Other industries, such as retail and ecommerce, have matured faster than healthcare in accommodating their data, because we are still early in our journey of working out what’s important for technology integrations. As a healthcare technology industry, we’re still in an era that is best described as data maximalism, getting excited about the potential value of a massive data set. This is further complicated by health plan data being sent to provider EHRs and dumped into giant repositories like data lakes.

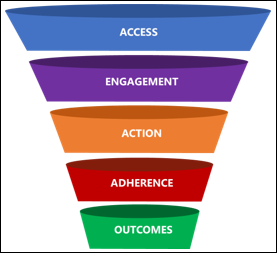

From an operational standpoint, healthcare decision makers and information technology leaders are challenged with managing the tsunami of patient data, which is often just pushed directly into provider workflows. Instead, we must focus our efforts on delivering the highest impact data at the point of care. Those curated data points will be the most important items to focus on during a patient’s care encounter, particularly in a VBC model. When physicians have great insight and act on it accordingly and compliantly, the patient receives more holistic care, the provider gets an accurate representation of the acuity of their patient, and reimbursement is appropriate based on the clinical risks that are associated with the patient.

To succeed in healthcare’s VBC environment, we must shift gears to a data minimalism approach. This is a strategy with the objective of synthesizing massive amounts of data and ultimately delivering the minimum amount of data required to benefit the frontline healthcare professional in managing a patient’s health. The approach rewards providers with more time for direct patient care, which is the most constrained element of the healthcare equation.

To achieve this result, healthcare organizations can deploy EHR technology integrations that use artificial intelligence (AI) to offer relief to clinical and administrative teams in risk-based documentation and coding activities. When looking for the best-fit technology provider, healthcare teams must understand that the goal is not to add more clicks, but to superpower the humans who are already doing their daily jobs.

AI has advanced by leaps and bounds in healthcare, scaling the impact of data analysis. AI-powered technology can transform data into user-friendly formats and then analyze that data against established clinical and quality rules to identify both known and previously undiscovered patient needs. Healthcare provider groups and health insurers that are looking to gain actionable insights from their data sets should seek a technology platform that can harmonize patient data into actionable insights so that the information can flow both ways, from a plan to a provider and from a provider to a plan. That way, providers can enhance their partnership with payer organizations to better manage and optimize patient care.

The main takeaway? Using technology to curate data is not intended to replace the human expert in the equation. It should superpower that human expert to achieve scalability and better outcomes. When providers can practice at the top of their license and engage more with the patient, rather than logging into records to flip through pages and pages of lab results, medication lists, past visit notes, and specialty referrals, we succeed in delivering efficient, effective, and high-quality care. Providers should be able to leverage a curated data set from the EHR that organizes information, makes it actionable, and amplifies their true expertise.
