
Readers Write 9/30/10

Submit your article of up to 500 words in length, subject to editing for clarity and brevity (please note: I run only original articles that have not appeared on any Web site or in any publication and I can’t use anything that looks like a commercial pitch). I’ll use a phony name for you unless you tell me otherwise. Thanks for sharing!

"Granularity" — A Detailed Analysis

By Robert Lafsky, MD

“Granular” is turning into a buzzword. And that’s not a good thing.

It was a perfectly respectable, albeit not very useful term in the analog days, referring usually to a physical material composed of — you know, little granules. You’ll actually see it used sometimes as a descriptive term in pathology and endoscopy reports, and in general use it describes some thing’s particular type of grainy texture. But then, of course, computer people got hold of it and gave it a much more specific, albeit metaphorical meaning, which I’ll get to in a minute.

Recently, writers in the mainstream media, with their ears always pressed to the ground and desperate for novelty, have picked up on this word and are starting to use it to describe more abstract things, in a way that fails to grasp the IT meaning at all. For instance, the other day political pundit Michael Gerson described a Karl Rove critique of Christine O’Donnell as “granular and well informed.” If you substituted “detailed” for “granular” in that sentence, you wouldn’t have changed the meaning a bit.

But IT people don’t use “granular” to mean just “detailed.” Hard copy or scanned documents can, of course, be very detailed. I remember a couple of old docs from my training days who would sit with pen and paper and do beautiful two- or three-page, single-spaced handwritten reports on their patients with every bit of the history, physical, and labs on them. It was impressive effort, very detailed, but even if you found those reports now and scanned them into your EMR, the information in them wouldn’t be granular.

No, for a computer, detail is necessary for granularity, but it’s far from sufficient. The computer has to be able to do something with the details so that it can store them in an orderly way and then use them for searches and reports. That sort of thing, of course, is the “use” that at least has the potential to be “meaningful.”

So if, say, a particular drug for hypertension is found to be dangerous for everybody over 60 with diabetes, I don’t have to go manually through a thousand records. The relevant facts are recorded in a yes-or-no fashion in a database. I can query my system and get an immediate list of all my patients who meet those criteria, with their addresses and phone numbers.

That’s granularity. Facts have to be detailed, but in a fashion where computers can take advantage of them.
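As a rough illustration of the query described above, here is a minimal Python sketch. The `Patient` fields, the `on_drug_x` flag, and the sample records are all hypothetical stand-ins, not the layout of any real EMR; the point is only that discrete fields can be filtered, while a scanned handwritten note cannot.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    # Hypothetical discrete fields; a real EMR schema will differ.
    name: str
    address: str
    phone: str
    age: int
    has_diabetes: bool   # recorded as yes or no
    on_drug_x: bool      # "drug X" stands in for the hypertension drug


def patients_at_risk(patients):
    """Return patients over 60 with diabetes who are taking drug X."""
    return [
        p for p in patients
        if p.age > 60 and p.has_diabetes and p.on_drug_x
    ]


# Invented sample data for illustration only.
patients = [
    Patient("A. Example", "1 First St", "555-0101", 72, True, True),
    Patient("B. Example", "2 Second St", "555-0102", 45, True, True),
    Patient("C. Example", "3 Third St", "555-0103", 68, False, True),
    Patient("D. Example", "4 Fourth St", "555-0104", 80, True, False),
]

at_risk = patients_at_risk(patients)
for p in at_risk:
    print(p.name, p.address, p.phone)
```

The same facts written out in a free-text note would be just as detailed, but none of the three conditions in the filter could be evaluated without someone reading the note.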

Maybe this is obvious to the IT business readers out there, but I sure spend a lot of time in the doctor’s lounge painstakingly explaining this to medical colleagues. And granularity is at the heart of all the arguing about workflow issues in EMRs, as well as interoperability and the coherence of automated reports that rages in the comment sections of this website and elsewhere.

I can’t offer a resolution of any of these arguments. But to get anywhere, we need commonly defined terms, and granularity is a pretty useful one. General media people out there, if you mean “detailed,” say “detailed.” Leave “granular” for those that really need it.

Robert Lafsky is a gastroenterologist in Lansdowne, VA.

A HCIT System Architecture for Cloud Computing

By Mark Moffitt

Note: This article uses a fictional story about Google and Meditech as a backdrop to describe a healthcare IT (HCIT) system architecture for cloud computing.

(Oct. 1, 2020) Today marks the eighth anniversary of Google’s purchase of an obscure private company known then as Meditech that marked the beginning of the transformation of the HCIT industry into what it looks like today.

At the time, the purchase shocked everyone. Over the years, Meditech had repeatedly rejected any notion of a buyout by another company. Then Google offered $1.5 billion, more than a 50% premium on the estimated valuation of the company. The offer, it turns out, was too good to turn down. Neil Pappalardo of Meditech walked away with a $400 million payout. Google’s market cap at the time: $168 billion.

The Vision

Google’s vision for the future of HCIT was straightforward: provide all IT services to healthcare systems as a cloud computing service at a price much lower than market rates as a strategy to capture 60% of the worldwide market by 2020. Google’s service included applications, data management, and integration. Google architected the system from the ground up for cloud computing, so they were able to offer the service at a much lower price while realizing higher margins than competitors.

Google bought Meditech for its customer base and use case models that had been hardened by use over many years. Google took Meditech’s functional specs and enhanced and implemented them in a new architecture. In addition, Google purchased several other HCIT vendors and integrated them to provide a total HCIT solution to customers.

Data Storage

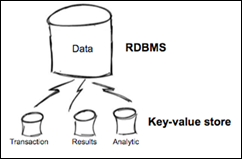

Google did not use a relational database management system (RDBMS) as was common at the time, and instead used schema-less, key-value, non-relational, distributed data stores, aka NoSQL.

RDBMS scale well, but usually only when that scaling happens on a single server node. When the capacity of that single node is reached, you need to scale out and distribute the load across multiple server nodes. This is when the complexity of relational databases starts to bump up against their potential to scale.

Google’s key-value data store model improves scalability by eliminating the need to join data from multiple tables. As a result, it can run on commodity multi-core server hardware that is far less expensive than high-end multi-CPU servers and expensive SANs. The overall reduction in cost due to savings in database license fees, maintenance, and hardware is around 70% when compared to using an RDBMS. Database sharding and the “shared-nothing” approach are ideal for managing large amounts of data at a low cost.
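To make sharding and “shared-nothing” concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any real product works: `ShardedStore` is a hypothetical class, each shard is an in-memory dictionary standing in for a separate server, and a key's shard is chosen by hashing the key.

```python
import hashlib


class ShardedStore:
    """Toy key-value store; each dict stands in for one independent node."""

    def __init__(self, num_shards):
        # Shards share nothing: each holds its own keys and values.
        self.shards = [dict() for _ in range(num_shards)]

    def _shard_for(self, key):
        # Hash the key so the same key always maps to the same shard.
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        index = int.from_bytes(digest[:8], "big") % len(self.shards)
        return self.shards[index]

    def put(self, key, value):
        self._shard_for(key)[key] = value

    def get(self, key, default=None):
        # A lookup touches exactly one shard and needs no joins.
        return self._shard_for(key).get(key, default)


store = ShardedStore(num_shards=4)
store.put("patient:1001", {"name": "A. Example", "age": 72})
store.put("patient:1002", {"name": "B. Example", "age": 45})

print(store.get("patient:1001"))
```

One caveat on this sketch: with simple modulo placement, changing the number of shards moves most keys to a different shard. Distributed stores commonly use techniques such as consistent hashing to limit that movement when nodes are added.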

Three Data Types

Another concept introduced by Google was segregating data into three buckets — transaction data, results data, and analytic data — and managing each differently. Competitors at the time combined all three into one big, complex RDBMS.

Transaction data — what was ordered, when and by whom, what tests were performed, or what meds were given to a patient — are persisted to a transaction data store. At some point, all of the transactions related to a patient encounter are collected in a single electronic medical record file and compressed to about 10% of original size. Results are also contained in this file but not images, due to size, as was the case with the original paper medical record and film file.

The compressed medical record file provides an interactive view of the patient’s encounter to satisfy legal and payment inquiries. These electronic medical record files are stored securely in the cloud. Records are never transferred between organizations; rather, access is authorized and the record viewed from the cloud.

Data is purged from the transaction data store once the electronic medical record file is created. The transaction data store remains a constant size and, as a result, it retrieves data faster and is easier to manage than if the transaction data store grew in size. Transaction data is concurrently stored in a separate analytic data store and is not purged.
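The lifecycle described above (write to the transaction store, copy to the analytic store, then archive and purge) can be sketched in a few lines of Python. Everything here is a hypothetical illustration: the store names, the `record` and `close_encounter` functions, and the choice of JSON plus `zlib` are assumptions, and the sketch makes no claim about the compression ratio a real system would achieve.

```python
import json
import zlib

transaction_store = {}   # encounter_id -> list of open transactions
analytic_store = []      # append-only copy, never purged


def record(encounter_id, transaction):
    """Persist a transaction and concurrently copy it for analytics."""
    transaction_store.setdefault(encounter_id, []).append(transaction)
    analytic_store.append({"encounter_id": encounter_id, **transaction})


def close_encounter(encounter_id):
    """Build the compressed record file, purging the live transactions."""
    transactions = transaction_store.pop(encounter_id)  # purge happens here
    raw = json.dumps(transactions).encode("utf-8")
    return zlib.compress(raw)


def read_record(blob):
    """Decompress an archived record file for viewing."""
    return json.loads(zlib.decompress(blob).decode("utf-8"))


record("E1", {"type": "order", "item": "CBC", "by": "Dr. Example"})
record("E1", {"type": "med_given", "item": "example-drug", "by": "RN Example"})

archive = close_encounter("E1")
print(len(transaction_store), len(analytic_store), len(read_record(archive)))
```

After `close_encounter` runs, the transaction store is empty again while the analytic store still holds both entries, which mirrors the constant-size property the article describes.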

Google partnered with several business intelligence vendors to offer advanced analytical services from the cloud using the customer’s analytic data store.

Results such as images, labs, reports, and waveforms are also stored in schema-less, key-value, non-relational, distributed data stores.

The three buckets — transaction data, results data, and analytic data — are each stored across multiple commodity servers using a “shared-nothing” approach. Scaling any individual bucket for a customer is almost as simple as adding server hardware.

Integration

Google used a derivative of their search engine technology to integrate a patient’s records and results across multiple providers and systems.

Application Development Framework

Google used an application development framework that made software easier to build and deploy. In an RDBMS, application changes and database schema changes have to be managed as one complicated change unit. Google’s key-value data store allows the application to store virtually any structure it wants in a data element. Application changes can be made independently of the database.
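A small Python sketch shows what storing “virtually any structure” in a data element can look like in practice. The `save`, `load`, and `route_of` helpers and the order fields are hypothetical; the store is a plain dictionary holding JSON strings, standing in for a key-value data store.

```python
import json

store = {}  # key -> JSON string; the store knows nothing about the fields


def save(key, value):
    store[key] = json.dumps(value)


def load(key):
    return json.loads(store[key])


# Version 1 of the application writes orders with two fields.
save("order:1", {"drug": "example-drug", "dose_mg": 50})

# Version 2 adds a "route" field. No schema change or migration is needed.
save("order:2", {"drug": "example-drug", "dose_mg": 50, "route": "oral"})


def route_of(order):
    # Newer code tolerates older records that lack the new field.
    return order.get("route", "unspecified")


print(route_of(load("order:1")), route_of(load("order:2")))
```

The tradeoff in this sketch is that the application, not the database, becomes responsible for handling old and new record shapes side by side.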

In addition, Google used a scripting language for code that changes most often — user-facing code. Both of these features combined to make software development easier and allowed applications to iterate faster. In software development, the rate of innovation is directly related to the rate of iteration.

Mark Moffitt, MBA, BSEE is the former CIO at GSMC in Texas and is working as an independent consultant while he searches for his next opportunity.

Software Upgrades – To Be or Not to Be? That is the Question

By Ron Olsen

The day your facility installs a new piece of software, you rarely think about the upgrades that will inevitably come later. You probably ask if such upgrades are included in the maintenance agreement, and then shuffle away that information for future use … or not.

Many times an upgrade is more than just a requirement from the vendor — it’s a welcome relief that offers bug fixes, provides additional functionality, and many times, increases productivity, which equates to money-saving. Hey, any time we humble IT/IS guys and girls can do something to keep the CIO happy, we’ve got to jump on it! That’s what IT should be all about — increasing the ability to save money and/or help other departments increase revenue streams.

Most of us have been caught in the XP vs. Vista vs. Windows 7 debate. The old adage, ‘If it ain’t broke, don’t fix it’ seems applicable here. XP works fine. Vista is, well, Vista. Windows 7 has generated a lot of hype. Windows 7 offers many enhancements, but if your organization’s PCs aren’t up to it, the new bells and whistles aren’t available. To get the full feel of the new Internet Explorer 9 beta release, Windows 7 is now required.

This is just one example of how an upgrade is never a simple, single-issue vote. There are dozens of interrelated concerns that an IT department must evaluate before pulling the trigger on a software upgrade.

And then there are the software compatibility issues. How many times have we heard from a vendor, “It’s not certified for (fill in any number of OS versions) yet!” This causes a push me – pull you effect. Some vendors are pushing you to move forward, and others you have to pull along with you.

Things to consider before upgrading your software:

1) Can you adjust your current processes to take advantage of new functionality? Many times we take an upgrade and claim there is not enough time to do a full evaluation prior to going live. Then, we certainly do not have time to go back and look again. This could actually cost your company money in the long run, instead of delivering the benefits of a well-planned project.

2) Downtime can be a deal-breaker for upgrades. No department ever wants to experience downtime unless it’s unavoidable. How will each department test the new upgrade? Do they have a full test system to work with? If all of the issues are thought out beforehand and these questions answered, upgrading shouldn’t be that painful.

3) Does hardware need to be replaced? Could this be a great opportunity to replace some old PCs and servers? Is this the catalyst that moves your facility to server virtualization …finally?!

4) What vendor software (enterprise forms management, ECM/EDM, etc.) will need to be upgraded simultaneously?

Thoughtful software and hardware upgrades are usually embraced by end users and the C-level alike. Personnel get new PCs that increase productivity, which keeps the Powers that Be happy once they’ve overcome the initial sticker shock. Just the idea of new PCs gets most staff members feeling like the hospital is moving forward technologically.

Server virtualization condenses the physical footprint of the server room, decreases power and cooling costs, and in most cases, reduces server administrative duties. And with your software running faster with full functionality from vendors’ latest compatible releases, IT/IS will (hopefully) get fewer end user complaints. Hey, it sounds good in theory!

Just make sure you plan well in advance; get buy-in from department heads, super users and (if you’re lucky) an enthusiastic executive; and communicate openly with vendors and you’ll be good to go.

Ron Olsen is a product specialist at Access.

Many software upgrades are downgrades, in actuality, and oft cause havoc and mayhem, as evidenced by your report about the Trinity upgrade of several months ago, causing misidentification.

Past formats of the records are altered by upgrades, in violation of medical legal requirements. The record ceases to appear as it did to the doctor at the time of care.

Mark Moffitt’s vision for what could be with the marriage of Google and Meditech is simply brilliant. He has nailed the value of the business model as much as he has nailed the benefits of an entirely new HCIT architecture.

As he has identified, there is potential for integration among a variety of 3rd party solutions, including BI, PHR, Telehealth, etc. Also, his concepts for data storage, retrieval and sharing are designed for the ultimate in scale and performance.

Google needs to acquire Mark’s talent, before someone else snaps him up.

Very interesting…two articles basically saying the same thing from very different perspectives. Granularity & Upgrades make the same very important point.

That is: The devil is always in the details!

Suzy, the computer system you are using right now is the result of countless software upgrades. Feel free to toss it out of your cave.

Dr. Lafsky said: “The computer has to be able to do something with the details so that it can store them in an orderly way and then use them for searches and reports. That sort of thing, of course, is the ‘use’ that at least has the potential to be ‘meaningful’.”

Truism, yet the 2010 EMRs are deficient and inferior in usability. Go figure, make meaningful use of devices that are not usable without workarounds.

The workarounds are more meaningfully useful than the devices.

“Data is purged from the transaction data store once the electronic medical record file is created. The transaction data store remains a constant size and, as a result, it retrieves data faster and is easier to manage than if the transaction data store grew in size. Transaction data is concurrently stored in a separate analytic data store and is not purged.”

I had to smile when I read this because it’s the exact opposite of the current MEDITECH Advanced Technology today, where in the new chronological (“Append-Only”) database, the database continues to grow in size as new activity (e.g. an edit) on an existing record creates a new section on that record. Makes for a nice audit trail though…

Still, a very interesting read. Kudos to Mark’s creativity!

Maybe, there is something to this… Just for fun, I checked and the domains MediCloud, Medi-Cloud, GreenCloud and Green-Cloud were already taken 🙂

Why should Google buy Meditech when they get involved in more lucrative activities with much better margins?

http://www.cringely.com/2010/09/googles-pound-of-flesh/